Three broken checkout flows, two mismatched shipping calculators, and a contact form that silently drops submissions. That was the Monday morning support queue for one agency partner we onboarded after their previous white-label team scaled from five concurrent builds to fifteen. The builds themselves looked fine in staging. The code was clean enough. But nobody had tested the actual paths real customers walk through after launch, and the result was predictable: client trust evaporated overnight. This is the QA collapse pattern, and it follows a disturbingly consistent trajectory across white-label e-commerce operations.

How the Collapse Actually Happens

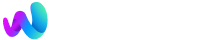

When a white-label team is running three or four WooCommerce builds at a time, somebody clicks through the checkout, somebody eyeballs the contact form, somebody spot-checks responsive layouts on their phone. QA is informal but present. Then the team picks up a seventh build, then a tenth, and the informal process doesn't scale. Nobody announces they're skipping testing. It just stops fitting into the timeline, and because it was never a documented step with assigned ownership, nobody notices the gap until production defects pile up.

This is the outsourcing quality control breakdown that most agencies don't recognize until clients start reporting issues that should have been caught internally. As one analysis of outsourced QA failures documented, outsourcing fails when communication, documentation, and expectations break down. In white-label work, the communication gap is structural. Your client doesn't talk to your dev team. Your dev team doesn't talk to the end users. Testing becomes the thing everybody assumes somebody else is handling.

The financial math accelerates the problem. When you're pricing white-label WordPress work to protect margins, QA time is the first thing that gets compressed because it's the hardest to justify on an estimate. A client sees "development: 20 hours" and nods. They see "testing: 8 hours" and ask why. So teams absorb QA into dev estimates, and when timelines get tight, testing is what gives. The work that gets skipped is the work that was never explicitly scoped, and testing is almost always that work. The consequence is quiet at first: a coupon code that applies twice, a shipping calculator that returns zero for Canadian addresses, a form confirmation email that never fires. Each one feels like a one-off bug. Taken together, they're a system failing.

The E-Commerce Blind Spot

Where this pattern causes the most acute damage is in WooCommerce and Shopify stores, because e-commerce has an unusual property: the critical user path involves real money. A broken hero section is embarrassing. A broken checkout is revenue loss your client can measure to the penny, and they will.

Checkout flow validation requires testing across payment gateways, shipping method combinations, tax calculation edge cases, coupon stacking behavior, and guest versus registered user paths. Because those dimensions multiply rather than add, manually testing every permutation on a single store takes hours. When a white-label team is managing fifteen stores simultaneously, the math doesn't work without automation. And the problem compounds: WooCommerce core and its payment and shipping plugins update frequently, and each update can alter checkout behavior in ways that don't surface until a real customer tries to pay.
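To make that math concrete, here is a rough back-of-the-envelope sketch. Every value in it is an illustrative assumption rather than a measurement from a real store: the option lists are hypothetical, and three minutes per manual pass is a guess. The point is only to show how quickly the combinations grow.

```python
from itertools import product

# All values below are illustrative assumptions, not data from a real store.
gateways = ["card", "paypal", "bank_transfer"]
shipping_methods = ["flat_rate", "free_shipping", "local_pickup"]
tax_cases = ["domestic", "cross_border", "tax_exempt"]
coupon_cases = ["none", "single", "stacked"]
customer_types = ["guest", "registered"]

# Every combination of the five dimensions is one distinct checkout path.
paths = list(product(gateways, shipping_methods, tax_cases,
                     coupon_cases, customer_types))

MINUTES_PER_MANUAL_PASS = 3  # assumed time to click through one path by hand
STORES = 15                  # the fifteen-store scenario described above

paths_per_store = len(paths)
hours_per_store = paths_per_store * MINUTES_PER_MANUAL_PASS / 60
hours_all_stores = hours_per_store * STORES

print(f"{paths_per_store} checkout paths per store")
print(f"{hours_per_store:.1f} manual hours per store")
print(f"{hours_all_stores:.1f} manual hours across {STORES} stores")
```

Under these assumptions a single store already has 162 paths, which is roughly a full working day of clicking, and that cost recurs after every update. Change any list and the total moves multiplicatively, which is why manual coverage tends to fall apart well before fifteen stores.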

CheckView, a WordPress plugin built specifically for this problem, automatically generates test flows for standard WooCommerce product, cart, and checkout pages. It runs real browser sessions on a schedule, verifying that submissions complete and emails send correctly. For agencies handling WordPress testing at scale, a tool like this eliminates the most dangerous category of "we assumed it worked" failures. If your team also handles Shopify store development, similar automated checkout monitoring exists on that platform through tools like Ghost Inspector and Testim, though the WordPress-native tooling has matured faster in this specific area.

The pattern extends beyond checkout. Form submissions, login flows, cart persistence across sessions, and cross-browser rendering all need verification. When agencies dig into why their white-label partnership is actually breaking down, testing gaps are often the root cause. They're obscured by symptoms that look like "the dev team is sloppy" or "communication is bad," but the underlying issue is that nobody verified the deliverable before it shipped.

Automating QA Without Replacing Judgment

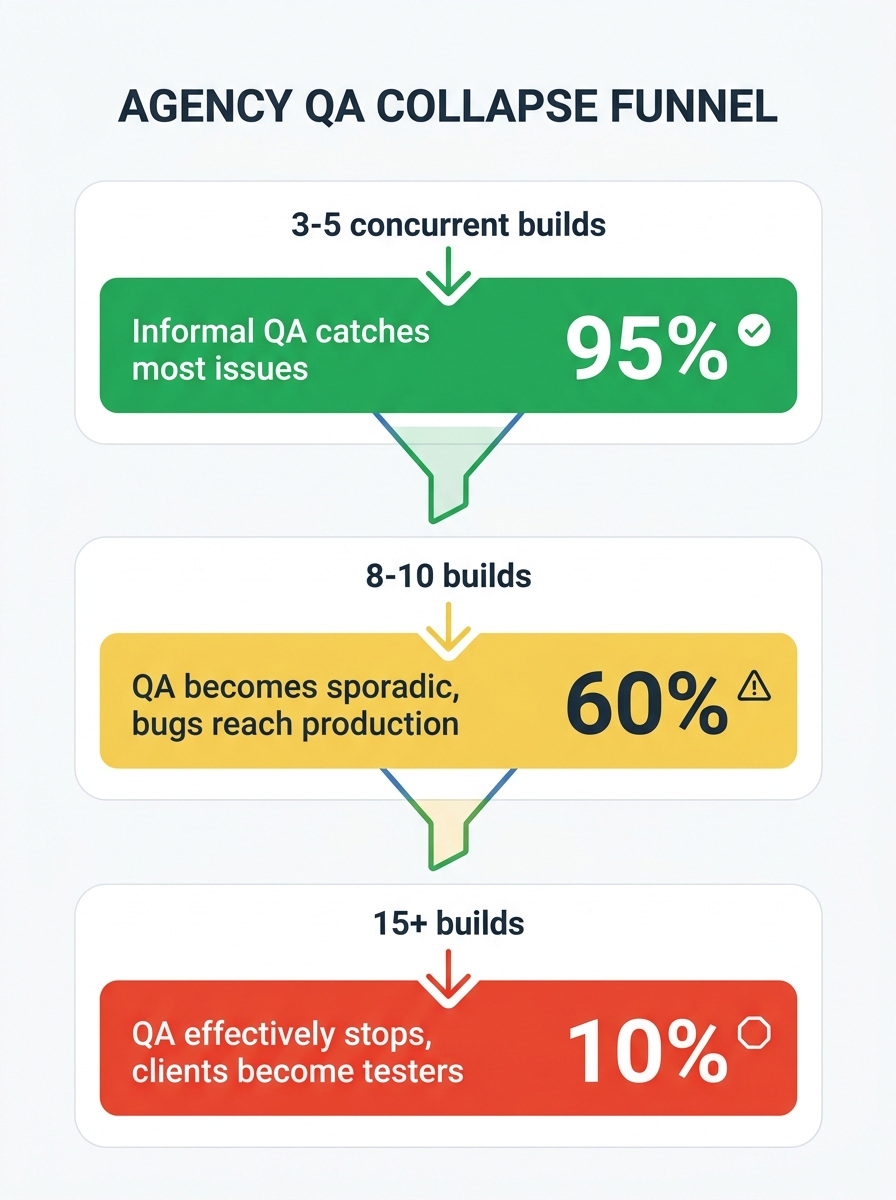

White-label QA automation sounds like the obvious answer, and in many cases it is the right direction, but implementation matters enormously. The teams that succeed with automation treat it as a safety net for predictable paths and keep human attention focused on unpredictable ones.

For predictable paths, scheduled automated testing is the right tool. Platforms like QA Wolf offer end-to-end test automation using Playwright, with deterministic code-based tests that execute as fast as the environment allows. For WordPress-specific work, CheckView handles form and checkout validation with minimal configuration. The goal is catching regressions automatically so your team doesn't need to re-test the same flows manually after every plugin update or theme change. When you're hiring WooCommerce experts for builds, baking these automated checks into the developer onboarding process matters as much as the tooling itself. If a new developer doesn't know that nightly tests run against every active store, they won't understand why the Monday Slack channel is full of failure notifications.


The unpredictable paths still need human eyes. How the site feels on a slow mobile connection, whether the product filtering UX makes sense with 200 products instead of 10, whether a specific WooCommerce extension conflicts with the theme's JavaScript: these require exploratory testing that no script can replicate. The best approach is to automate the repetitive validation and redirect your freed-up QA time toward the judgment-dependent work that actually differentiates a good build from a mediocre one.

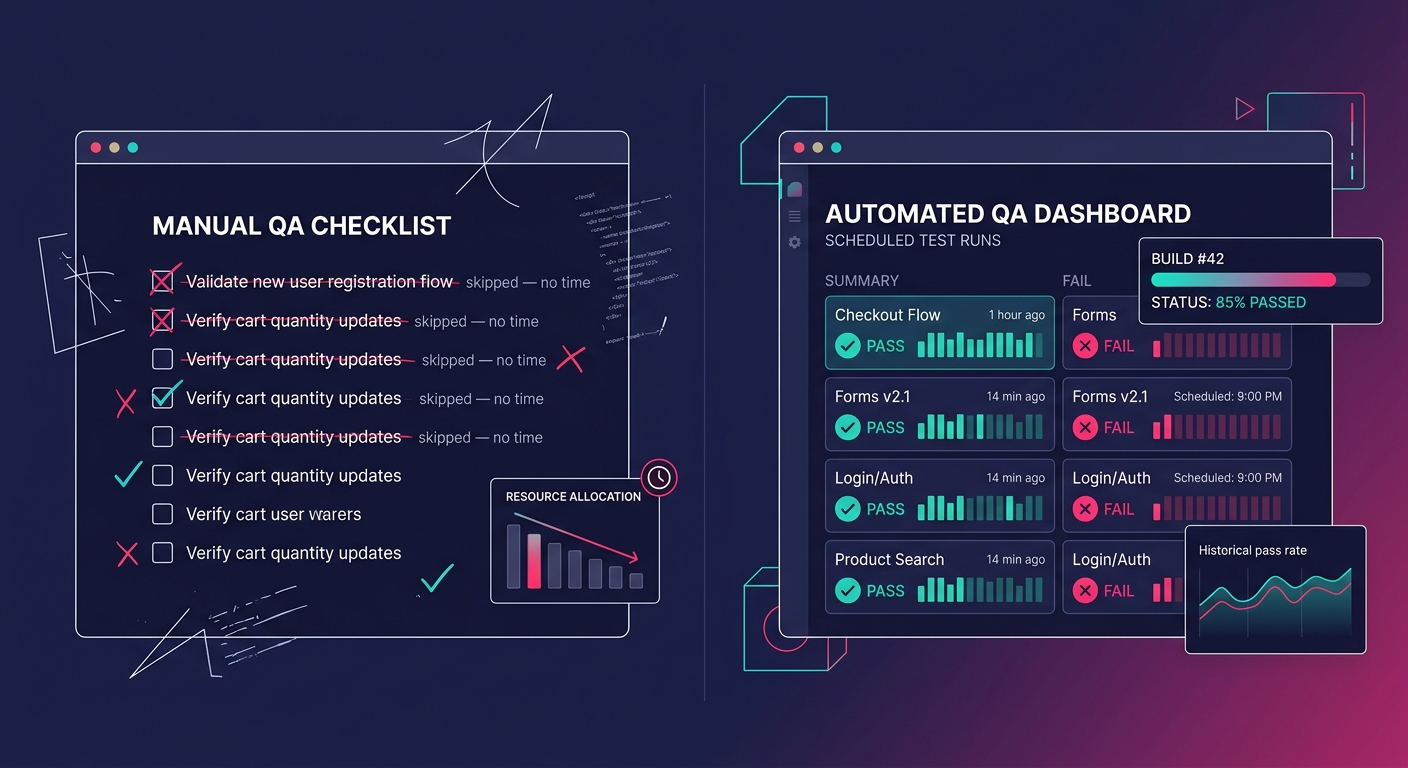

Automated client approval workflows solve the other half of the QA problem: getting sign-off without endless email threads. Power Automate supports multi-stage approval flows where a staging link routes to the right stakeholder, they approve or request changes within the workflow, and the status updates automatically in your project management tool. Approveit does something similar directly inside Slack, which works well for agencies that already live there. The point is removing the ambiguity around who has approved what, because in white-label work, "the client said it looked fine" over a Zoom call is not a defensible QA record when something breaks in production.

This connects to a broader systems issue: when your outsourcing model starts breaking at scale, QA collapse is often the first visible symptom because it's the step with the least explicit ownership. Dev owns the code. Design owns the mockups. Project management owns the timeline. QA ownership, in many white-label setups, belongs to everybody and therefore nobody.

Where This Gets Uncomfortable

The honest version of this story is that automation solves the mechanical problem while leaving the structural one intact. You can set up CheckView, configure nightly Playwright runs, build approval workflows in Power Automate, and still have a QA collapse if nobody is accountable for reviewing the results. Automated tests that fail silently, because the notification goes to a shared Slack channel nobody monitors or because the dashboard exists but nobody checks it, are arguably worse than no automation at all. They create the illusion of coverage.

The teams that actually escape the QA collapse pattern share one trait: they've made a specific person responsible for triaging automated test results every day. That person isn't a "QA lead" in any formal sense at most agencies. It's typically a senior developer or project manager who spends fifteen minutes each morning reviewing overnight test runs and escalating failures before they reach the client. The tooling makes this possible. The habit makes it work. One caveat: the test environments need to be trustworthy enough that a result means something, which is its own challenge that many agencies underestimate.
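One way to support that morning habit is a digest that names the triage owner and calls out failures nobody has acknowledged yet. The sketch below is hypothetical throughout: `CheckResult`, `build_triage_digest`, the store names, and the owner are invented for illustration and do not reflect the export format of CheckView, Playwright, or any other tool.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class CheckResult:
    """One overnight check. Hypothetical shape, not any tool's real export."""
    store: str
    flow: str
    passed: bool
    acknowledged_by: Optional[str] = None


def build_triage_digest(results: List[CheckResult], owner: str) -> str:
    """Summarize failures per store and flag the ones nobody has picked up."""
    failures = [r for r in results if not r.passed]
    by_store = defaultdict(list)
    for result in failures:
        by_store[result.store].append(result.flow)
    unacknowledged = [r for r in failures if r.acknowledged_by is None]

    lines = [
        f"Overnight run: {len(failures)} failure(s) across "
        f"{len(by_store)} store(s). Triage owner: {owner}"
    ]
    for store in sorted(by_store):
        lines.append(f"  {store}: {', '.join(sorted(by_store[store]))}")
    if unacknowledged:
        lines.append(f"  {len(unacknowledged)} failure(s) still unacknowledged")
    return "\n".join(lines)


# Made-up sample data for illustration only.
results = [
    CheckResult("store-a", "checkout", passed=True),
    CheckResult("store-a", "contact_form", passed=False),
    CheckResult("store-b", "checkout", passed=False, acknowledged_by="dana"),
    CheckResult("store-c", "checkout", passed=True),
]

digest = build_triage_digest(results, owner="dana")
print(digest)
```

The design choice worth noting is that the owner's name and the unacknowledged count sit in the message itself. A failure list posted to a shared channel is easy to ignore; a message that says one named person still has an open item is harder to scroll past.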

There's also an unresolved tension between thoroughness and speed that automation doesn't eliminate. Running a full checkout flow validation suite across three payment gateways, two shipping plugins, and four browser configurations takes real time, even automated. When a client wants a hotfix deployed in two hours, the pressure to skip the automated suite is identical to the old pressure to skip manual testing. The technology changed. The incentive structure didn't. Every workaround (parallel test execution, risk-based test selection, smoke-test-only pipelines for hotfixes) involves a judgment call about acceptable risk that no tool can make for you.

White-label agencies treating QA as a line item to compress are building a predictable failure mode into their operations. The collapse is gradual, quiet, and deniable right up until a client's checkout stops processing orders on a Saturday night and nobody finds out until Monday. Automation gives you the infrastructure to prevent that. Whether your team actually uses it remains a management problem, and management problems don't have a plugin.