Clutch.co's April 2026 WordPress developer rankings list over 4,000 companies, each carrying verified reviews, portfolio badges, and capability tags that make every shop look qualified on paper. Agencies shopping for a white-label WordPress partner can spend weeks reading profiles and still end up with a developer who misses deadlines, ships untested code, or ghosts mid-project. The gap between a polished directory listing and actual production-ready output is where an outsourcing quality control checklist earns its keep.

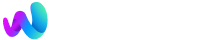

This article traces how a structured partner vetting framework comes together, from the initial trigger that forces agencies to formalize their process, through scoring criteria and pilot projects, to the security and communication layers that separate a real partner from a contract-filler.

The Trigger: When Referrals Stop Being Enough

For most agencies under ten employees, white-label partner selection starts informally. Someone knows a freelancer. A Slack community recommends a shop. The first two or three projects go fine because volume is low and the agency lead personally reviews every deliverable.

The cracks show up around project seven or eight. A WooCommerce build ships with broken cart logic on mobile. A theme customization overwrites core template files instead of using child themes. A "senior WordPress developer" turns out to be a junior dev working under someone else's portfolio. At this point, the agency has already burned client trust and internal hours fixing work they paid someone else to do.

This moment is what forces agencies to write things down. The informal "I know a guy" approach collapses under the weight of multiple concurrent projects, and what replaces it needs to be repeatable.

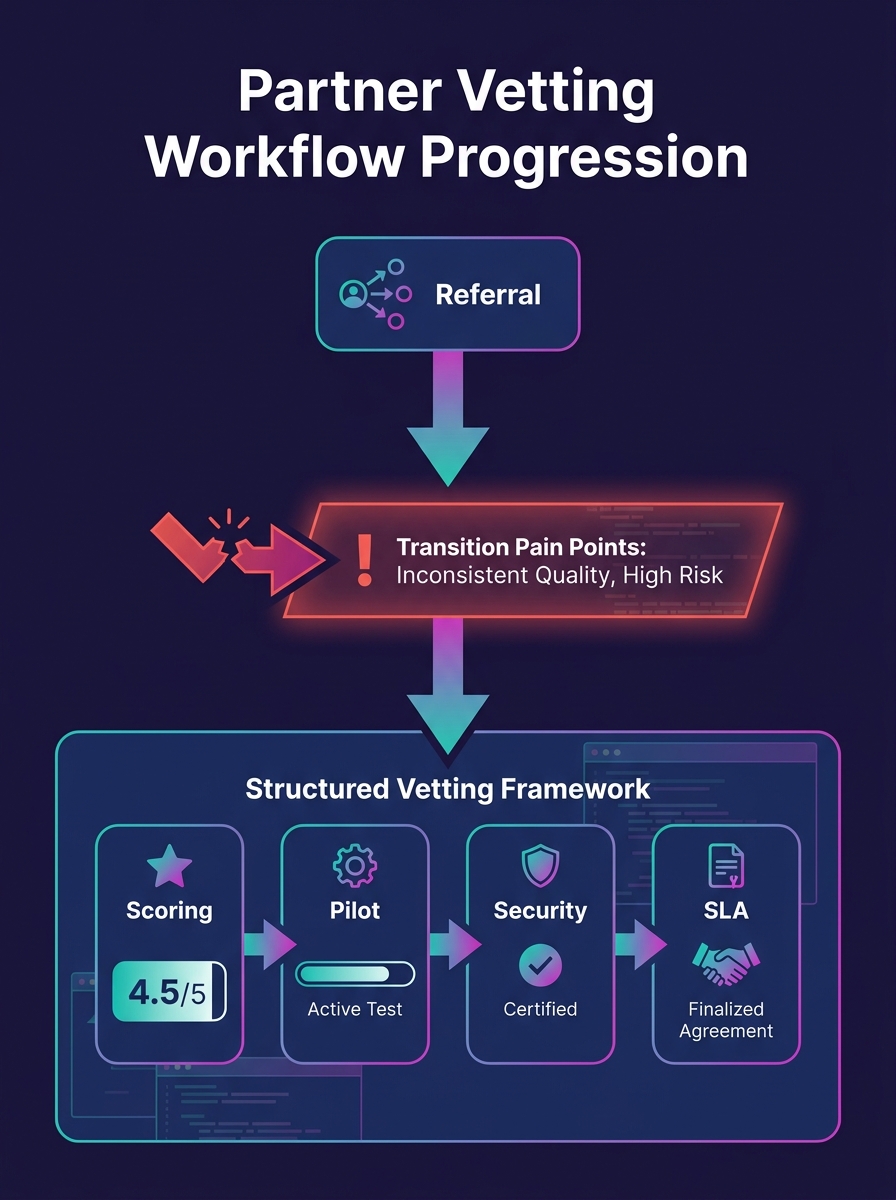

Defining the Scoring Grid

The foundation of any set of WordPress developer evaluation criteria is a weighted scoring system. Without one, you're comparing apples to opinions.

Industry guidance from Certa's vendor vetting research emphasizes that standardized evaluation criteria are the first step in effective vetting. Agencies that skip this step end up making gut-feel decisions project after project.

Here's the scoring grid that works in practice, adapted from frameworks circulating across agency communities:

High-Weight Criteria (Score 1–10 Each)

- Portfolio relevance: Do they have five or more WordPress projects similar to your typical client work completed in the past 18 months? Generic "we can do anything" portfolios are a yellow flag. You want to see builds in your verticals, whether that's membership sites, ecommerce, or content-heavy editorial layouts.

- QA process documentation: Can they describe their testing workflow? You're looking for code review against WordPress coding standards, responsive testing across breakpoints, performance benchmarks, and security scanning through tools like Wordfence or Patchstack. If they can't articulate their QA process, they probably don't have one.

- NDA willingness and IP handling: White-label work requires the partner to be invisible. If they hesitate on NDAs or want to showcase your clients' sites in their own portfolio, walk away.

- Verified client reviews: Clutch and similar platforms provide verified feedback on communication, timeliness, and deliverable quality. Pay attention to patterns in negative reviews. One complaint about scope creep is human; five complaints about missed deadlines is a system failure.

Medium-Weight Criteria (Score 1–10 Each)

- Communication speed: Do they respond to initial outreach within 24 hours? The courtship phase is when response times are at their best. If they're slow now, they'll be slower when you're six months into a relationship.

- Pricing transparency: Agencies that share clear rate cards and scope definitions signal maturity. Partners who give you a number only after three calls and a "discovery session" for a standard WordPress build are padding process to justify margin.

The high-weight items carry double points in your total. A partner who scores 8/10 on portfolio relevance and QA documentation but 5/10 on pricing transparency is a better bet than someone who quotes fast but can't show you a testing workflow.
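The double-weighting rule is easy to turn into arithmetic. The sketch below is one way to do it, not a prescribed tool: the criterion keys, the `weighted_total` helper, and both sample partners are hypothetical, and only the 1–10 scale and the 2x multiplier for high-weight items come from the grid above.

```python
# Hypothetical criterion names; the 2x multiplier for high-weight
# criteria is the only rule taken from the scoring grid itself.
HIGH_WEIGHT = 2
MEDIUM_WEIGHT = 1

WEIGHTS = {
    "portfolio_relevance": HIGH_WEIGHT,
    "qa_documentation": HIGH_WEIGHT,
    "nda_and_ip": HIGH_WEIGHT,
    "verified_reviews": HIGH_WEIGHT,
    "communication_speed": MEDIUM_WEIGHT,
    "pricing_transparency": MEDIUM_WEIGHT,
}

# Highest possible total: every criterion scored 10.
MAX_TOTAL = sum(10 * weight for weight in WEIGHTS.values())


def weighted_total(scores: dict[str, int]) -> int:
    """Sum 1-10 scores, counting each high-weight criterion twice."""
    total = 0
    for name, weight in WEIGHTS.items():
        if name not in scores:
            raise ValueError(f"missing score for {name}")
        value = scores[name]
        if not 1 <= value <= 10:
            raise ValueError(f"{name} must be between 1 and 10, got {value}")
        total += weight * value
    return total


# Illustrative partners (made-up numbers).
partner_a = {  # strong on substance, weaker on pricing transparency
    "portfolio_relevance": 8, "qa_documentation": 8, "nda_and_ip": 8,
    "verified_reviews": 8, "communication_speed": 7, "pricing_transparency": 5,
}
partner_b = {  # quotes fast, but cannot show a testing workflow
    "portfolio_relevance": 6, "qa_documentation": 3, "nda_and_ip": 8,
    "verified_reviews": 7, "communication_speed": 9, "pricing_transparency": 9,
}

print(weighted_total(partner_a), "of", MAX_TOTAL)
print(weighted_total(partner_b), "of", MAX_TOTAL)
```

With four high-weight and two medium-weight criteria the maximum works out to 100, so totals read as percentages. In this example the fast-quoting partner loses more on QA documentation than it gains on pricing and responsiveness.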

Running the Pilot Project

Scores on paper aren't enough. The pilot project is where your outsourcing quality control checklist gets a field test.

The smartest approach is to start with a low-stakes internal project or a minor client update. Nice Digitals recommends evaluating partners on turnaround, build quality, and communication under real conditions rather than hypothetical ones. Agencies that skip this step and hand off a $15,000 client project on day one are gambling with their reputation.

During the pilot, track these five things:

- Requirement gathering accuracy: Did they ask clarifying questions, or did they assume? Partners who build first and ask later produce deliverables that need three rounds of revision.

- Milestone adherence: Did they hit the agreed timeline for each phase, or did everything bunch up at the deadline?

- Deliverable quality at first pass: How much cleanup did your internal team need to do before the work was client-ready?

- Revision responsiveness: When you sent feedback, how fast and how accurately did they implement changes?

- Documentation completeness: Did they hand off a build with notes on custom functions, plugin configurations, and any deviations from the original scope?
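Of the five items, milestone adherence is the simplest to quantify. Here is a minimal sketch, assuming you log an agreed date and an actual delivery date for each phase. The `milestone_slips` helper, the phase names, and the dates are all made up for illustration.

```python
from datetime import date


def milestone_slips(milestones: list[tuple[str, date, date]]) -> dict[str, int]:
    """Return days late per milestone; 0 is on time, negative is early."""
    return {
        name: (delivered - agreed).days
        for name, agreed, delivered in milestones
    }


# Hypothetical pilot log: (phase, agreed date, actual delivery date).
pilot_log = [
    ("wireframes", date(2026, 5, 4), date(2026, 5, 4)),
    ("staging build", date(2026, 5, 11), date(2026, 5, 14)),
    ("handoff", date(2026, 5, 18), date(2026, 5, 18)),
]

slips = milestone_slips(pilot_log)
late_phases = [name for name, days in slips.items() if days > 0]
print(slips)
print("late phases:", late_phases)
```

A final handoff that lands on time after a late middle phase can be a sign that work bunched up at the deadline, so it is worth reading the per-phase numbers rather than only the last one.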

If you've worked through the common pitfalls of white-label WordPress services, you know that the pilot phase is where most problems surface early enough to be cheap to fix.

A partner who performs well on a $500 test project but can't articulate how they'd handle ten concurrent builds is a freelancer, not a partner.

Adding the Security and Compliance Layer

Once a candidate passes the pilot, the vetting framework needs a security gate. The WordPress plugin supply chain has become a real attack vector, something we covered in depth when examining how backdoors enter the plugin ecosystem. Your white-label partner's security hygiene directly affects every client site they touch.

The security checklist should cover:

- Version control: Are they using Git? Can they show you commit history and branching strategy? Partners who FTP files directly to production are a liability.

- Update management: How do they handle WordPress core, theme, and plugin updates? Do they test updates in staging before pushing to production?

- Access controls: Do they use role-based access and rotate credentials between projects? A partner with one shared admin login across all client sites is a breach waiting to happen.

- Backup protocols: What's their backup cadence, and can they demonstrate a successful restore?

- Vulnerability scanning: Are they running automated scans, and what tools do they use?

Agencies that take security seriously at the infrastructure and compliance level should expect the same rigor from their partners. If a white-label developer can't answer these questions clearly, they haven't thought about them, which means your clients' data is exposed.
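Because this layer is a gate rather than a score, it can be recorded as a set of yes/no answers where any single "no" blocks the partner. The sketch below assumes that all-or-nothing reading. The `SecurityAnswers` field names are hypothetical shorthand for the checklist items, not an established standard.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class SecurityAnswers:
    """One yes/no answer per checklist item (field names are illustrative)."""
    uses_git: bool
    tests_updates_in_staging: bool
    role_based_access: bool
    rotates_credentials: bool
    demonstrated_restore: bool
    runs_automated_scans: bool


def failed_checks(answers: SecurityAnswers) -> list[str]:
    """List every checklist item the partner answered 'no' to."""
    return [f.name for f in fields(answers) if not getattr(answers, f.name)]


def passes_security_gate(answers: SecurityAnswers) -> bool:
    """Assumption: the gate is all-or-nothing, so one failure blocks."""
    return not failed_checks(answers)


# Hypothetical candidate: solid on Git and scanning, but shares one
# admin login across clients and has never demonstrated a restore.
candidate = SecurityAnswers(
    uses_git=True,
    tests_updates_in_staging=True,
    role_based_access=False,
    rotates_credentials=False,
    demonstrated_restore=False,
    runs_automated_scans=True,
)

print(passes_security_gate(candidate))
print(failed_checks(candidate))
```

Returning the list of failed items, not just a boolean, gives you a concrete remediation list to send back to a partner you would otherwise like to work with.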

Formalizing Communication and SLAs

The last layer of the framework locks in how the partnership actually operates day to day. This is where most agency partner selection processes fall short, because it feels like bureaucracy when the relationship is new and everyone is enthusiastic.

But communication breakdowns are among the most common killers of outsourced partnerships. We've written about why ticket-based workflows fail agencies when there's no feedback loop built into the process. The same principle applies to white-label relationships: if you don't define communication norms upfront, you inherit whatever default the partner uses internally.

Your SLA document should specify:

- Primary point of contact on both sides (not a rotating cast of project managers)

- Response time expectations by channel: Slack messages within 4 hours during business hours, email within 24 hours, emergency escalations within 1 hour

- Project management tooling: whether you're using Asana, Basecamp, ClickUp, or something else, agree on one system. Two people updating two different tools creates information gaps.

- Milestone reporting cadence: weekly progress updates at minimum, with screenshots or staging links for visual work

- Escalation paths: who gets contacted when something goes wrong, and what "wrong" means in concrete terms (missed deadline by 48+ hours, critical bug in production, scope dispute)

- Warranty terms: bug fixes for functional defects typically range from 28 to 90 days post-launch. Define what's covered, what's excluded (client modifications, third-party plugin conflicts), and response times by severity level.

Tip: Before signing an SLA, ask for the partner's average response time over the past 90 days, not their promised response time. Any mature shop tracks this metric and will share it if asked directly.
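If a partner does share response-time data, or you log your own during the pilot, checking it against the SLA targets takes a few lines. This is a rough sketch under stated assumptions: the `sla_report` helper and the sample data are hypothetical, the targets are the per-channel figures listed above, and the business-hours qualifier on Slack is ignored for simplicity.

```python
from datetime import timedelta
from statistics import mean

# Per-channel targets from the SLA list above. Business-hours
# handling is deliberately left out of this sketch.
SLA_TARGETS = {
    "slack": timedelta(hours=4),
    "email": timedelta(hours=24),
    "emergency": timedelta(hours=1),
}


def sla_report(response_times: dict[str, list[timedelta]]) -> dict[str, dict[str, float]]:
    """Average response time and share of responses within target, per channel."""
    report = {}
    for channel, target in SLA_TARGETS.items():
        samples = response_times.get(channel, [])
        if not samples:
            continue  # no data logged for this channel
        avg_hours = mean(s.total_seconds() for s in samples) / 3600
        within = sum(1 for s in samples if s <= target) / len(samples)
        report[channel] = {
            "avg_hours": round(avg_hours, 2),
            "within_target": round(within, 2),
        }
    return report


# Made-up sample: three Slack replies and two email replies.
observed = {
    "slack": [timedelta(hours=1), timedelta(hours=3), timedelta(hours=8)],
    "email": [timedelta(hours=20), timedelta(hours=30)],
}

report = sla_report(observed)
print(report)
```

Reporting the share of responses within target alongside the average matters because a single very slow reply can hide behind an acceptable mean, and the reverse is also true.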

When you eventually need to hire a dedicated web designer to support growing demand, having these SLA templates already battle-tested with your white-label partner makes onboarding any new resource considerably faster.

The State of Play

Agencies that have built a formal white-label partner vetting process report fewer project overruns, less internal rework, and stronger client retention, because the problems get caught at the evaluation stage instead of the delivery stage.

The framework described here isn't static. Every failed pilot, every SLA violation, every security incident adds a line item to your checklist. The agencies that refine their evaluation criteria after each partnership cycle build institutional knowledge that compounds over time. The ones that wing it keep relearning the same lessons with different vendor names attached.

If you're reviewing your existing partnerships or starting fresh, the scoring grid and pilot project sequence give you a repeatable process that tightens with each iteration. And if you want to see what production-quality WordPress work actually looks like from the agency side, our website portfolio shows the standard we hold our own builds to, the same standard we apply when evaluating anyone we'd trust with client work.